Copyright © 2004 The Shuttleworth Foundation

Unless otherwise expressly stated, all original material of whatever nature created by the contributors of the Learn Linux community, is licensed under the Creative Commons license Attribution-ShareAlike 2.0.

What follows is a copy of the "human-readable summary" of this document. The Legal Code (full license) may be read here.

You are free:

to copy, distribute, display, and perform the work

to make derivative works

to make commercial use of the work

Under the following conditions:

Attribution. You must give the original author credit.

Attribution. You must give the original author credit.

Share Alike. If you alter, transform, or build upon this

work, you may distribute the resulting work only under a license identical to this one.

Share Alike. If you alter, transform, or build upon this

work, you may distribute the resulting work only under a license identical to this one.

For any reuse or distribution, you must make clear to others the license terms of this work.

Any of these conditions can be waived if you get permission from the copyright holder.

Your fair use and other rights are in no way affected by the above.

This is a human-readable summary of the Legal Code (the full license).

2005-01-25 22:35:07

| Revision History | |

|---|---|

| Revision 0.0.1 | 01/NOV/2004 |

| Initial version | |

Table of Contents

- 1. Tour de Shell Scripting

- 2. The Shell

- 3. Regular Expressions

- Introduction

- What are regular expressions?

- The fullstop

- Let's explore "sed" syntax

- Square brackets ( [ ] ), the caret ( ^ ) and the dollar ( $ )

- Using sed and pipes

- The splat (asterisk) ( * )

- The plus operator ( + )

- Returning from detour to our discussion on curly braces …

- RE's are greedy for matching patterns

- Placeholders and word boundaries

- Word boundaries ( < and > ) - a formal explanation

- The tr command

- The cut command

- The paste command

- The uniq command

- The Sort command

- The grep command

- 4. Practically Shell Scripting

- 5. Using Quotation marks in the Shell

- 6. So, you want an Argument?

- 7. Where to From Here?

- 8. Loops

- 9. User input to a script

- 10. Additional Information

- 11. Positional parameters & variables re-visited

- 12. Bits and pieces - tying up the loose ends

- A. Writing html pages

- B. An introduction to dialog

- C. A Comparisson of bash, tsch and ksh

- Index

List of Figures

List of Tables

Table of Contents

This course is structured with the following students in mind:

A "newbie" to shell scripting, OR

A user who wants a refresher on a particular aspect of shell scripting.

For Category 1 users I suggest you work through each section, do all the relevant exercises and Labs.

If you fall into Category 2 above, then just look up the section you need a refresher on, do the examples below each section to drive the point home and move on.

The Lab (project) is designed to get you into the groove of writing shell scripts and is, in essence, an example of the practical application of the shell script.

You will learn an immense amount from doing the exercises and Labs, and you should have a great deal of fun too. In the Labs, we begin by building a really simple script and progress to a script that you will want to show your friends... but don't. Unless they're Linux nuts too, they'll think you're just weird!!!!

Finally, for those geeks out there, (or those of you who think you're too clever for this sort of stuff), there are additional challenge sequences.

For example, where the "wannabe-geeks" build a simple menu system, you must build a menu system with the "ncurses" library. This may mean reading up on the dialog package, figuring out how it works and then implementing it. I have included in the appendices explanations of the challenge sequences. Look out for the challenge sequences and really pull out all the stops!

Oh, a final word of warning. If you haven't noticed already, Unix and Linux people have a pretty wacky sense of humor. I'm no exception. If you find some of my jokes and quips in this course offensive, you're definitely taking this whole Linux thing WAY TOO SERIOUSLY. Take a chill pill and re-read it and relax!

Pencil and Paper

Yes. I know these two concepts are foreign to some of you, but hey, give an old codger like me a break.

To have logged onto your favorite Linux distribution as a user (with your username).

We don't at this stage need to be logged in as root.

![[Note]](../images/admon/note.png) | Note |

|---|---|

At some time during this course you will need to log in as root. If you get to that point and are not the system administrator for the machine you are working on, then you may need to build your very own Linux machine. Any system administrator in their right mind would NEVER give you the root password. Of course, if you are the system administrator, you already have the root password! | |

In order to learn to be a great system administrator, and "shell script-er", you MUST be. . . . .

LAZY.

Yes, that's right, LAZY. Say it, and again. Once more. Good!!!

Why? Because, if you are anything like me, you want to spend your time doing things you love, not the mundane boring stuff that will leave you feeling like you've really had to work hard!

If you're lazy, you will think of simpler, better, faster and more efficient ways of getting through your day's tasks, look efficient, get many pats on the old' back, or taps on the old' head and leave work feeling like you're just the smartest person around.

Next, if you REALLY want to learn to script, NEVER do manually, what you can do by using a script. Script everything!!!!

So, let's get scripting. But first, what is a shell?

The shell, in UNIX and Linux is the equivalent of a command interpreter in Windows. Its job is to accept commands, as typed by the user on the command line, and interpret each of those commands, acting on it however necessary. The shell is a little like DOS operating system in many ways; the only difference being that it's like DOS on steroids. I hope that over the remainder of this course you will you will understand this sentiment.

For example typing:

ls -l

|

on the command line produces some output. How does UNIX know to call the ls command? How does it know to interpret the -l as a switch? What about the output? How does the command output know to come to the screen? By chance? Nope. Nothing in Linux happens by chance!

The shell does these things!

What about a shell script?

A shell script is in essence, a whole bunch of shell commands entered together in a file. A little like the DOS batch file, where many shell commands are grouped together to perform some function(s).

What if we wanted to run two commands over and over again? Say,

free

|

and

df -h

|

One way of doing it would be to type the commands in over and over again. More work!!! Of course it is. We are looking at means of sticking to adage 1.1, not so? So, we could get clever and type both commands on a single line, separated by a semi-colon

free;df -h

|

We've reduced our finger-work, but not by much. Again the better way of doing this is to put both these commands into a file. For our example we will call this file mycmds.sh:

riaan@debian:/tmp> vi mycmds.sh <To create the script>

riaan@debian:/tmp> chmod +x mycmds.sh

riaan@debian:/tmp> ./mycmds.sh

total used free shared buffers cached

Mem: 321628 317836 3792 0 14644 88536

-/+ buffers/cache: 214656 106972

Swap: 506480 1060 505420

file system Size Used Avail Use% Mounted on

/dev/hda1 5.5G 3.5G 2.1G 63% /

tmpfs 158M 4.0K 158M 1% /dev/shm

riaan@debian:/tmp>

|

Then all we have to do it execute it, and voila , we have "created a new command", aka mycmds.sh. Now each time, we merely need to run the script and the commands are executed together.

This is a shell scripting course, but we're going to start off by looking at the info pages, the man pages and the whatis database before we start scripting. This is a good idea because at least we know that we're all on the same page.

So, what is this man page, and info page, and that other stuff you mentioned?

Man pages is a term used as a short-hand for manual pages - or the manual. Info pages, are like manual pages (man), but are a newer format that the movers and shakers are trying to adopt.

Which to use? Well both actually.

In some cases, man does not contain all the necessary information, and then one needs to refer to the info pages. Sometimes it may be far easier to locate that which you are looking for by firing up the manual page. First we'll tackle info pages.

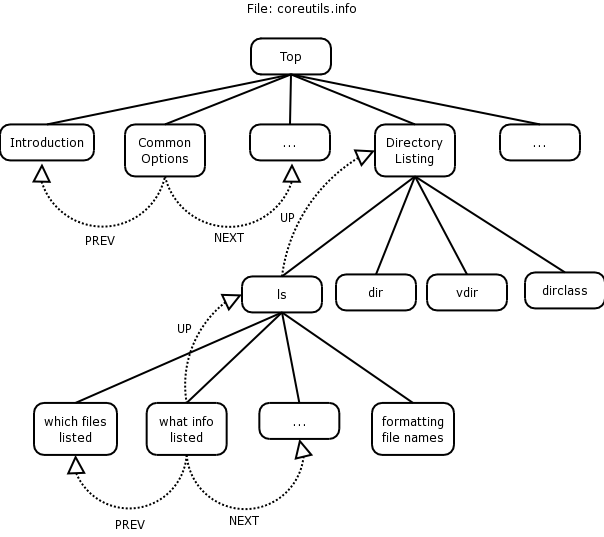

The diagram below illustrates the structure of the info pages. Refer to it while reading this section

Info pages are like man pages in many ways, except they provide a lot more information than man pages (well mostly anyway). Info pages are available on all the Linux distributions currently available, and they are similar to the man pages, although in some instances give you more information.

If we look at a typical way of invoking info, it would be the word info, followed by a space, followed by the name of the command that you want to invoke info on. For example, for the command ls:

info ls

---^-

|

![[Note]](../images/admon/note.png) | Note |

|---|---|

Type the commands just as you see them here. I have placed minus signs under the command and it's arguments, and a caret (^) under the space. This is to illustrate that the command should be typed EXACTLY as is. | |

This should give us an information page on the ls command. We could invoke info on a whole range of utilities by typing:

info coreutils

---^------

|

where coreutils is just another group of info pages. Coreutils is a grouping of info pages, containing commands such as ls, cd, cp, mv or list directory contents (ls), change directory (cd), copy files and directories (cp) and move or rename files (mv). One could, for instance, type:

info mv

|

The way info is structured, is that when you first start it, it will display the node at which you are beginning your search.

In our coreutils example, on the top of the page (right at the top) the first line looks as follows:

File: coreutils.info, Node: Top, Next: Introduction, Up: (dir)

|

Starting on the left-hand side, we see the file that we're "info'ing" the coreutils.info file.

The filename that contains information about the ls, cp, mv and cd commands amongst others is coreutils.info.

The current Node is Top, which indicates that we're at the top of the coreutils info page and cannot go higher within this group of pages.

From the top level of the info page on coreutils we can now do a couple of things:

We can go to the next node (by typing 'n'), which is the next topic, called Introduction. You will notice that there is no link to a Previous topic, since we are at the top of this group of pages.

We could scroll down to the menu and select a particular topic (node) we want displayed.

File: info.info, Node: Top, Next: Getting Started, Up: (dir)

Info: An Introduction

*********************

The GNU Project distributes most of its on-line manuals in the "Info

format", which you read using an "Info reader". You are probably using

an Info reader to read this now.

If you are new to the Info reader and want to learn how to use it,

type the command `h' now. It brings you to a programmed instruction

sequence.

To read about expert-level Info commands, type `n' twice. This

brings you to `Info for Experts', skipping over the `Getting Started'

chapter.

* Menu:

* Getting Started:: Getting started using an Info reader.

* Expert Info:: Info commands for experts.

* Creating an Info File:: How to make your own Info file.

* Index:: An index of topics, commands, and variables.

--zz-Info: (info.info.gz)Top, 24 lines --All-------------------------------------------------------------------------

|

If you were to scroll down to the Directory listing line, you'll see that on the left-hand side there's an asterisk, followed by the topic, followed by a double colon and what is inside the info group:

* Directory listing:: ls dir vdir d v dircolors

|

These are the topics covered in this particular node.

If you hit enter at this stage. You should see that the node has changed. The top line of your page will look as follows:

File: coreutils.info, Node: Directory listing, Next: Basic operations,Prev: Operating on characters, Up: Top

|

This is similar to the top of the coreutils.info page as described above, but this example includes a previous node, which is "Operating on characters", with the next node being "Basic operations".

Once I've scrolled down (using my arrow keys) to * Directory listing, I may want to go and look at information about the ls command to see what I can do with ls. Again you use the up or down arrow key and scroll to "ls invocation" and hit Enter

Once there you can read the ls info page to see what it tells you about the ls command.

How do you go back to the Directory listing info page? Type u for UP, which should take you to the previous listing.

How do you go from "Directory listing" to "Basic operations", when you're currently at the "Directory listing" node? n will take you to the NEXT node (taking you from the "Directory listing" to "Basic operations").

If you want to go right to the top, in other words, back to the coreutils group, press t for TOP.

You can do searches within info by using the forward slash (/) and then include a pattern, which might be something like

/Directory

|

This tells info that you want to look for the pattern Directory. Bingo! We find Directory listing, as it is the first entry that matches the pattern. If you want to use the same pattern to search again, press forward slash followed by enter:

/<ENTER>

|

This will allow you to search for the pattern "Directory" once again. How do you quit info? q will quit info.

If you want to go one page up at a time, then your backspace key will take you one page up at a time.

Finally, to obtain help within info, type '?'. This will get you into the help page for info. To leave help, press CTRL-x-0.

That is essentially how info works. Part of the reason for moving to info rather than man pages is to put everything into texinfo format rather than gz-man format. In the future, much more software will include manual pages in texinfo format, so it's a good idea to learn how the info pages work.

Run info on find.

press u.To which node of the info page does this take you?

Search for the find command.

Select the find command.

If I were to try to find a file using it's inode number, how would I do this. (Hint: search for inum)

What node does this (inum) form part of?

Go to the "Finding Files" node and select the Actions node.

How do you run a command on a single file once the file has been found.

Having covered the info pages, we need to look at man pages since man is the standard on most UNIX and Linux systems. 'man' is short for manual. This is not a sexist operating system. There are no woman pages but we can find out how to make some a little later (to keep man company).} Manual pages are available on every operating system. (If your system administrator hasn't installed them, ask him politely to do so, as no Linux system should be running without man pages.).

The man command is actually run through a program called less, which is like more except it offers more than the more command does.

![[Note]](../images/admon/note.png) | Note |

|---|---|

Mark Nudelman, the developer of less, couldn't call it more, since there was already a more command, so it became less. Linux people do have a sense of humor. | |

To invoke man pages type:

man <command>

|

For example, the ls command that we info'ed above,

$ man ls | less

|

Looking at our example above, the manual page on the ls command is run through the less command.

What can you do from within man?

Well, pretty much the things you can do with info. Instead of a menu system, and nodes, we're looking at a single page detailing all the options and switches of the ls command.

If we want to search for a particular pattern we would use forward slash (/) just like we did in info.

For example, we could type

/SEE ALSO

|

This pattern search would take us to the end of the man page to look at the SEE ALSO section.

We could type question mark with a pattern, which does a reverse search for the specified pattern. Remember forward slash does a forward search and question mark does a reverse search.

?NAME

|

This pattern search will reverse search up the man page to look for the pattern NAME.

![[Note]](../images/admon/note.png) | Note |

|---|---|

You will notice that I'm not saying look for the string NAME, rather I'm saying look for the pattern NAME. This is because pattern matching is a critically important part of UNIX and Linux and shell scripting. We'll learn more about patterns as we go through the course. | |

If we want to scroll down one page at a time within the man page (i.e. we've looked at page 1 and we've read and understood it, and we want to go to page 2), then the space bar takes us forward by a page.

Similarly if we want to reverse up the man page, we press b for back, which will scroll backwards through the man page.

How do we get back to our prompt? The 'q' key comes in handy again. 'q' for quit.

man pages are generally broken down into a host of different sections. There's a SYNOPSIS section, a DESCRIPTION section, and a SEE ALSO section. Read through the man page and you will understand the different sections.

If you need help on moving around through the man page, type 'h' for help, which will give you a listing of all the help commands. You will see that it has displayed the help commands NOT for man but for less. Why? Because the pager for man, (pager, the tool that gives you one page at a time instead of just scrolling the man page past you too fast to read), is the less command

We will cover the less command a bit later but you can look it up with the info pages as follows:

info less

|

So 'h' within the man page will show you help on the 'less' command at the same time as displaying the requested manual page.

Sometimes you need to read a man page three or four times before you completely understand it, and of course sometimes you may never understand it! Don't be deterred. That's what separates the kanga's from the roo's.

do a man on the nl command

What is the function of this command?

How would one right justify the number ensuring it has leading zeros?

And also number non-blank lines?

Who wrote this program?

What else should we view to get a complete picture of the nl command?

What version of the nl command do you have installed on your system?

The whatis database is usually rebuilt on a Linux system at night. The job of the whatis database is to search through every manual page on your system looking for the NAME section within these man pages, classifying them and placing them into a database, to facilitate searching in the future.

The whatis database is useful in that it gives us the ability to quickly look up what a particular command does. So if I asked you to tell me what the nl command does, you could look it up in the man pages or you could look it up in the whatis database.

man nl

|

or

whatis nl

|

The latter method should return with the NAME section from the man page, showing you what the commands job is on the system. It should tell you that nl numbers the lines. Similarly wc counts words, lines and characters for you.

The whatis database is very useful because it allows you to quickly find out, what a particular command on the system does.

If the whatis database is not up-to-date, it is quite simple to update it. Generally though, the updating of the whatis database is a simple automated process. Once a night, the operating system should go about updating the whatis database. Even if the system administrator has installed a whole host of new software on the system, by virtue of the fact that the man pages for that software would be installed at the same time as your new application is installed, your whatis database should pick up those pages and add them to its database each night.

As a side note, updating the whatis database manually is simply a matter of

$ makewhatis -u -w

|

and the whatis database will be updated.

The idea behind being lazy is that you want to take a system and get it to do stuff automatically for you, so that you can spend more time surf-skiing or walking on the mountain, or doing things you enjoy doing.

Now people say to me "Why must I be lazy?"

Because it means that you need to think of better, quicker ways of doing things and shell scripting is a way to achieve that.

If you haven't thought of a better way of doing it, you're not applying your mind. If you apply your mind you will find that there are many different ways to skin a cat in Linux. Shell scripting is one of the many ways you can speed up mundane tasks.

So the idea behind shell scripting is to automate this process of getting jobs to be done on your behalf.

To achieve this using scripts, you could take a series of system administration tasks, put them together in a single script, run them unattended and they should produce output that would (hopefully) match what you require.

Finally, this brings me to another adage.

Addage 1.3

There is never only one way of solving a problem in Linux. The way I solve a problem may be completely different to the way you solve it. But does this matter? Absolutely not! Is my solution better than yours? Perhaps, but I have been doing this for a long time, so take note of how I do it.

There are some basic commands that we are going to look at. The idea is to get you into the process of understanding how commands are structured and build an understanding of what the commands do.

From hereon out, I'm going to assume that you can find out lots of things about commands primarily by looking at info and man pages.

Almost every Linux command can be run from the command line using various switches (or arguments / options) which allow one to change the output of this command in a number of different ways.

The who command is designed to tell you who's logged on to the system.

If we run the who command without any switches, the left hand column shows the user id. This the user currently logged on to the system. In your case, you might be logged on as root, or perhaps as your user. The second column indicates where you are logged in.

riaan@debian:~> who

riaan :0 Apr 30 11:13 (console)

riaan pts/0 Apr 30 11:13

riaan pts/3 Apr 30 11:14

riaan pts/4 Apr 30 11:30

riaan pts/5 Apr 30 13:19

riaan pts/6 Apr 30 12:07

riaan pts/7 Apr 30 12:09

riaan@debian:~>

|

So if you look at the who command output, my user riaan is logged in from :0 which is the X console. He's also logged on to

pts/0 and

pts/1

|

These are pseudo terminals, indicating he's also logged into two pseudo terminals.

The final, third column indicates what time the user logged on.

The who command tells us about users using our system. That's great!

What are the other switches that we can use with who.

who --help

|

This will show you the various switches that we can use with the who; command. So if we use a:

who -H

|

it prints a heading line for us. The output should look as follows:

$ who -H

NAME LINE TIME FROM

heidi ttyp1 Nov 27 17:29 (168.210.56.177:S)

mwest ttyp2 Nov 10 15:04 (apotheosis)

heidi ttyp4 Nov 11 13:18 (168.210.56.177:S)

|

To view a short listing which is the default listing for the who command:

who -s

|

Using the -u switch:

who -u

|

will show the users and their process id''s.

In scripts, one can use the same commands as on the command line, including all the switches those commands use. One can run any command and produce standard text output, which one can use. We'll talk about how you can use the output later.

Run the command

$ who -u

root tty2 Aug 4 10:41 . 2839

riaan :0 Aug 4 07:53 old 2836 (console)

|

to identify which users are logged into your system and from which processes they are logged on.

This will show how long a terminal has been idle. It will show not only which users are logged on and what process ids they are but also how long that user has been idle. Idle users might have gone out for lunch or they might have left for the day. In default mode, most of these systems don't log you out if you're idle for longer than 10 or 15 minutes. In the old days, most systems were configured to automatically log you out after 15 minutes.

![[Note]](../images/admon/note.png) | Note |

|---|---|

On Debian, the -i switch does not add any extra output, it simply prints a message suggesting that you not use -i as it will be removed in future releases. Use -u as above. However the -i switch may work with other brands of Linux. | |

Okay, so that's the who command. We're going to use these commands later to build a system to monitor our system automatically, because we want to be spending our time doing things we enjoy.

How many users are currently logged onto your system?

Is any user logged onto your terminals tty1 -> tty6?

Who (which user and or terminal) has been idle for the longest time?

Ensure that all output is displayed with a heading line.

What run-level are you currently running in and what was your previous run-level? What other command will show you this information?

What would you imagine a users message status would be? (Take a guess or read the man page for write)

What does the w command do? You could run:

riaan@debian:~> whatis w

w (1) - Show who is logged on and what they are doing.

riaan@debian:~>

|

riaan@linux:~> w

21:40:17 up 11:03, 6 users, load average: 0.30, 0.34, 0.30

USER TTY LOGIN@ IDLE JCPU PCPU WHAT

root tty2 21:40 8.00s 0.06s 0.06s -bash

riaan :0 10:38 ?xdm? 58:31 0.04s -:0

riaan pts/0 10:38 11:01m 0.00s 2.08s kdeinit: kwrited

riaan pts/3 11:18 10:22m 14:37 2.63s /usr/lib/java/bin/java -mx32m -jar /home/riaan/jedit/4.2pre9/jedit.j

riaan pts/4 11:28 1:07m 0.21s 0.21s /bin/bash

riaan pts/5 11:28 0.00s 0.17s 0.03s w

|

which should print some information about the w command.

The w command not only tells us who are logged in, but also what they're doing. Are these users running applications? What actual processes are they running at this time? Perhaps someone's running an application like OpenOffice. w will tell us this.

If you look at the output of this command, it's got a list of headings that are fairly similar to the format of the who command.

Later we'll have a look at modifying the report columns, to get the output into a different format that may be more useful.

One of the reasons for taking you through these commands is that we're going to start writing our first shell scripts using these commands, so it is as well that we understand them now.

The date command is a useful command that can do all sorts of nifty things for us (apart from printing out the date).

It can convert between Unix time, (which is the number of seconds since 1/1/1970 - commonly known as the epoch) and a human readable (normal) date and time.

Conversely, it can also convert back from date time today to the number of seconds that have elapsed since the 1/1/1970. It can format output in a whole variety of ways. Let's look at some examples of the date command.

For that I'm going to do:

info date

|

If you scroll down, you will see a section with examples. Looking at the example:

date +" "

|

We may now include a string describing the format inside these quotation marks.

In the shell there's a big distinction between double quotes, single quotes (which is another lesson altogether, see Chapter 5), and back quotes - let's not get them confused for now.

Within this double quoted string we can include any number of arguments. What arguments can we include? Each argument starts with a percentage sign.

To display the time, we could use:

%H - -will give us the hours in 24 hour format (0-23).

%M - -will give us the minutes (0-59) of the day

|

If we had the following string:

date +"%H:%M"

|

we will end up with hours and minutes of the day on our system. The result of the above command should be similar to:

15:04

|

But let's say that we want the hours in 12-hour format rather than 24-hour format. We could then replace the %H with a %l. The result would then be:

3:04

|

There's a host of other things that we could do. For example if we are in 12-hour format, 3:04 doesn't indicate whether it is morning or afternoon. Thus we could include %p:

date +"%l:%M %p"

|

This would show us that the time is actually:

3:04 PM

|

rather than 3:04 AM.

That's for time, but what about for the date? What happens if we want to show the date, which is:

24-Nov-2003

|

then, we should in theory be able to create a date string to reflect this format.

A way we can do is this is using the following:

date +"%d-%b-%Y"

|

where %b is a short format for month to produce Nov instead of November.

If we want to combine the date and time:

date +"%d-%b-%Y %l:%M %p"

|

This would give us the full date and time report:

24-Nov-2003 3:04 PM

|

There are a lot of other parameters that you can use within the date command. You can view these by looking at the relevant info page with :

info date

|

We're going to use this command in our script, because in almost every script that you will write you are going to want to know what time the script started, what time the script ended, when it did a particular job within the script, etc.

Using the info command for assistance, format the output of date, so that it resembles the following: Today's date is Tuesday, 27 January 2004. The time is currently 11h 32m and 49s.

Show the date in Julian format.

What day of the year is today?

Include in the output of the date, the time zone and the AM/PM indicator

Given the number 1075200287, determine what date, day and time this represents.

The final command I want to describe is a command used to send output to the screen: echo.

We've seen so far that we were able to run commands but, as yet, we don't know how to simply output some text to the screen. We may want to print a string to the screen, prior to printing the date.

Something such as:

Today's date is:

24-Nov-2003 3:04 PM

|

We would need some way of echoing that to the screen, wouldn't we?

In order to do this, there is the echo command. echo can be a bit of a nasty gremlin because there are at least two echo commands on your system. One is a shell built-in, and one is an external command and therefore it can be a bit tricky.

We're going to start off with a vanilla case. Later on we will see how we can choose which echo command we want to use.

So by way of an example, we'll use it to format the date command.

echo "Today's date is: "

date +"%d-%b-%Y %l:%M %p"

|

This would be a good time to show you how to create your first shell script. We're going to edit a file and for this you can use any editor[1]

Open your editor (whatever editor you prefer) and put the following commands in the first lines of the file:

echo "Today's date is: "

date +"%d-%b-%Y %l:%M %p"

|

Save that file as myfirstscript and exit your editor.

You've just created your first shell script. Great! How easy was that? How do you run it to make it actually do its job?

Linux has three sets of permissions that set the permission mode of the file . One for the owner, one for the group and one for everyone else (i.e. Everyone that is not the owner, and is not part of the group to which the file belongs). You would have covered this in an earlier course (Linux Fundamentals). The mode of the file will also determine whether the file is executable or not.

Thus, to make the file executable, the mode of the file must have the execute (x) permissions set.

Note that this differs from Microsoft Windows which looks at the extension to decide the type of the file. Windows assumes that .com and .exe are executable files. Notice too, that myfirstscript does not have an extension. Is that valid? Sure it is. This is Linux after all!!

In sum, to make our script executable we must change the mode of the file. How? Using chmod (change mode) command as follows:

chmod +x myfirstscript

|

This script is now executable. Does this mean that we've executed it? No, not yet. We execute it with:

./myfirstscript

|

and that should output:

Today's date is:

24-Nov-2003 3:04 PM

|

Finally, you will notice that in order to execute this command, I preceded it with a "./".

Try running the script without the "./". What happens?

What the "./" does is that the shell FIRST looks in they current directory (.) for the script before trying to look in the PATH. We will cover this is a little more detail later.

Of course, you could add the script to a new directory ~/bin (in my case /home/hamish/bin). Since this directory is in my PATH environment, the script will be "found" and will execute even without using "./"

Write a simple script to print "Hello <YOUR USER NAME>" on the screen.

Modify your scripts to additionally output the directory you are currently in.

Write a simple menu system, with the menu options as follows(note: this menu does not have to respond to the user's input at this stage):

0. Exit

1. Output a text version

2. Output an HTML version

3. Print help

4. Provide a shell prompt

Select an option [1-4] or 0 to exit:

Include the current date and time in the top right hand corner of your menu

Ensure that your menu system (I'm assuming you called it menu.sh) can execute. Execute it.

Challenge sequence:

Consult the appendixAppendix B and teach yourself the dialog package.

There are many examples in /usr/share/doc/dialog-xxx.yyy.

Once you understand this package, modify your menu system in 3 above to use the ncurses library (dialog) to spruce it up.

This section is going to cover file commands. File commands are commands such as ls (list).

Notice again, how the laziness of the Unix people comes to the fore. They could have had a command called list, but that would have required two more characters (and two more carpals - fingers!) and clearly that was a lot more effort, so we just have the ls command. The ls command shows us a listing of files in a particular directory.

This is an appropriate place to take a detour on our tour de scripting and have a look at file matching and wildcard matching. It may be something that you're familiar with, but let's have a look at it and come back to ls in a moment.

Wildcard matching allows us to match a number of files using a combination of characters. What are those characters?

Table 1.1. Wildcards

| Symbol | Name | Purpose |

| * | Splat | matches 0 or more of any character |

| ? | question mark | matches 0 or 1 character |

| [ ] | square brackets | matches one from a range of characters |

| ! | bang | invert the pattern |

So if we typed

ls *

|

we end up listing 0 or more of any characters. So it would match any filename of any length because any filename would have a minimum of a single character. The splat matches 0 or more characters following each other.

The question mark will match a single instance of any character. Later, when we study regular expressions, the full stop ( . ) matches any single character. Given the expression:

ls -la ab?a

|

this would match the files:

abba

ab1a

ab_a

ab9a

abca

...

|

What range of characters do [] include? Well we may say something like:

[abc]

|

which would match any (single character) within the following range: a or b or c but it does not match abc since this is 3 characters long. Similarly

[ab]cde

|

would match acde or bcde but it would not match abcde. Square brackets indicate a range of alternative characters. How would we match both:

ham01

|

and

ham10

|

Simple, with:

ham[0-9][0-9]

|

as [0-9] implies that any character in the range 0 to 9 will match.

Would it also match ham9?

No, for the reason that our pattern search is expecting 2 numeric characters after the pattern ham. (two sets of square brackets are displayed)

We could also combine letters and numbers, for example:

ls [abc123]

|

which would match the files:

a

b

c

1

2

3

|

(Yes Linux and UNIX can have files with all of the above names!)

Notice when we used the square brackets [0-9], we use the range specifier, which was the dash character in the middle. This dash has nothing to do with the minus sign and means match anything in that range from 0 through to 9.

Thus, typing:

[a-z]

|

matches any character in the range from a to z.

The final pattern matching wildcard is the bang command. The exclamation mark is the inverse of what you're trying to match. If we were to look at our pattern matching, we could say:-

ls [!a-c]*

|

which, would match everything NOT starting with an "a" or a "b" or a "c", followed by anything else.

So would it match abc ?

No, because it starts with an a.

Would it match bcde?

No, because it starts with a b.

Finally would it match erst?

Yes, because q is not in the range a to c. So it would match q followed by any set of zero or more characters.

Prior to doing the following command ensure that you create a new temporary directory, and change directory to this new directory [ by doing so, it will be easier to clean up after the exercise is over. ]

I'd like you run the following command (I'm not going to explain it now, it will be covered later).

touch {planes,trains,boats,bikes}_{10,1,101,1001}.{bak,bat,zip,tar}

|

This command creates a number of files for us in one go. Typing:

ls p*

|

will show us all files that start with a 'p', followed by 0 or more characters (planes_ in our case). Similarly

ls b*

|

will show us the files boats_ and bikes_ since only these files begin with a 'b'. If we typed:

ls bik*

|

it will produce all the bikes files, while

ls b*_??.*

|

will indicate all the files that start with a 'b', followed by 0 or more characters, followed by an underscore followed by exactly 2 characters, followed by a full stop, followed by 0 or more characters. Thus only

boats_10.bak

boats_10.bat

boats_10.zip

boats_10.tar

bikes_10.bak

bikes_10.bat

bikes_10.zip

bikes_10.tar

|

I've given you some additional examples that you can try out in your own time. It would be worth your time running through these examples and ensuring that you understand everything.

Exercises:

Run the following command in a new subdirectory

touch {fred,mary,joe,frank,penny}_{williams,wells,ferreira,gammon}.{1,2,3,4,5}

|

Look at the following tu understand the different fields

touch {fred,mary,joe,frank,penny}_{williams,wells,ferreira,gammon}.{1,2,3,4,5}

------Name---------- ----------Surname------- -Category-

|

list all the people who fall into category 5

List all the people whose surnames begin with a w.

List only people in category 2, whom's surnames begin with a w, and whom's first name begins with an "f"

Move all "fred's" into a directory on their own, but exclude the "gammons"

Ensure that joe and penny wells in category 3 and 5 are deleted.

List only those people whose names have 5 characters in them.

Returning from our detour, there's a whole bunch of other file commands that we can look at. We've touched on the ls command, which gives us a listing of files and from the previous set of examples on pattern matching and wildcarding, you will have gotten an idea of how ls works.

ls , like every other Linux command can take switches. Below is a quick summary to some of the switch options.

-l show a long listing (include file name, file size, date last modified, the permissions, the mode of the file, the owner, the group of the file)

-a shows all files including {hidden} files (. and ..)

Two special hidden files are:

. this file is our current directory

.. this file is our previous or parent directory.

|

Often in your home directory, you will have full control over your . directory (i.e. your current directory) but you will most probably have absolutely no control of your .. directory (i.e. your parent directory).

We have other commands like:

cp copy files or directories

mv move files or directories

wc shows the number of lines, number of words and number of characters in a file.

wc -l show us the number of lines in our file.

nl numbers the lines in our file.

There are many system commands that we can use. We're going to start using these in our shell scripts.

Remember, a shell script is nothing more than a group of Linux commands working together to produce a new command.

In order to build our system that is going to manage our Linux machine, we're going to need to know a little bit about system commands. System commands such as:

df shows the disk free space

du shows the disk usage

fdisk shows the partitioning on our disk

iostat shows the input output status

vmstat shows the virtual memory status

free shows the amount of free memory

We will use these commands, but they are a subset of the commands available to us for monitoring our system.

For example, mostly we want to run a command to check whether our file systems are filling up. It makes no sense to have a full filesystem! For that we might use the df command

df would produce a listing of our partitions on our Linux machine and will show us which partitions are 100% full, which partitions are almost empty, which partitions are mounted, etc..

What we're going to be doing, is working our way towards building a system that will automatically show us when a partition becomes 100% full.

Or perhaps we want to build a system that shows us when it's greater than a particular threshold. So we might set a threshold of 95% full, it's no good telling us when the system has crashed that it's crashed; we want to know before the system crashes.

Several switches can be used with df such as:

df -h

|

This produces output in human readable format. If you run this command now, you should see at least one partition and that's the root partition. It should show you how much space is being used, how much is available, what the size of the partition is and what particular filesystem it's mounted on.

The df command is what we'll start working on for our practical, because it most certainly is one of the most useful commands that you're going to need to run to make sure your system is not becoming overfull.

Alternately, you could use the du (disk usage) command to show you which files are consuming the largest volume of disk space:

du - s

|

will show a summary of our current filesystems' capacity. Again, how do you get information about these commands? Using the info or man command will inform you about switches pertinent to these commands.

For example a further switch for the df command might be:

df - hT

|

which will tell us what type of filesystem we're running. It might be an extended 2, 3, or a vfat filesystem.

The "du" command, like the "df" command, has a complete set of switches unique to it and shortly we'll start looking at those in a bit more detail.

The fdisk command is used primarily to show us what partitions we have on a particular disk.

BlackBeauty:/install # fdisk -l

Disk /dev/hda: 10.0 GB, 10056130560 bytes

240 heads, 63 sectors/track, 1299 cylinders

Units = cylinders of 15120 * 512 = 7741440 bytes

Device Boot Start End Blocks Id System

/dev/hda1 * 1 760 5745568+ 83 Linux

/dev/hda2 761 1299 4074840 5 Extended

/dev/hda5 761 827 506488+ 82 Linux swap

/dev/hda6 828 1299 3568288+ 83 Linux

|

It should show you what your partitions are.

If you execute the above command as a user (in other words, your prompt is a dollar) you're not going to be able to see what your partitions are set to. Why? Because only the superuser (root) has permission to look at the disk. In order to run this command, you need to be root.

baloo:/home/riaan# free

total used free shared buffers cached

Mem: 498572 493308 5264 0 48700 230492

-/+ buffers/cache: 214116 284456

Swap: 706852 8196 698656

|

This command shows the total memory, used memory, free memory, swap space, how much of our swap space has been used and how much of our swap space is still available for use.

baloo:/home/riaan# vmstat

procs -----------memory---------- ---swap-- -----io---- --system-- ----cpu----

r b swpd free buff cache si so bi bo in cs us sy id wa

0 0 3792 3508 14208 59348 0 0 32 72 1065 818 11 4 84 2

|

The vmstat command shows us how busy our system is, how many processes are running and how many are blocked. It also shows memory information: how much memory is being used by the swap daemon and what our buffers and caches are. Additionally, it shows us how many processes are being swapped into and out of memory. Finally, it shows users, system, idle and waiting time. We're going to use it later to monitor our system

Finally, the iostat command.

iostat

Linux 2.6.4-52-default (debian) 09/02/04

avg-cpu: %user %nice %sys %iowait %idle

2.51 0.03 1.99 0.82 94.64

Device: tps Blk_read/s Blk_wrtn/s Blk_read Blk_wrtn

fd0 0.00 0.00 0.00 4 0

hda 3.93 304.75 23.83 3868650 302512

hdd 0.01 0.59 0.00 7524 0

|

This command gives you information about input and output on your system, and how well (or otherwise) it is performing.

We'll take a closer look at the system performance commands in more detail later.

![[Note]](../images/admon/note.png) | Note |

|---|---|

In order to provide you with further information on the performance of your system, you should install the sysstat package. rpm -ivh sysstat.x.y.z-r1.rpm (RedHat system) (see the appendix for other distributions) The iostat command is part of the sysstat package, so if you don't install sysstat, then skip the iostat stuff) | |

Write a simple script to display the free/used disk space on your machine

Additionally, show the status of the memory and allow the vmstat commands to produce 3 iterations.

Using the iostat command, write a script that will illustrate how busy you machine is

Linux is built being able to run instructions from the command line using switches to create the output.

The question of course is how do we make use of that?

One of the ways to make use of this is by using the three special file descriptors - stdin, stdout and stderr.

Under normal circumstances every Linux program has three streams opened when it starts; one for input; one for output; and one for printing diagnostic or error messages. These are typically attached to the user's terminal (see man tty(4)) but might instead refer to files or other devices, depending on what the parent process chose to set up. | ||

| --Taken from the BSD Library functions manual - STDIN(3) | ||

Table 1.2. Standard Input, Standard Output and Standard Error

| Type | Symbol |

| standard input | 0< |

| standard output | 1> |

| standard error | 2> |

Generally standard input, referred to as "stdin", comes from the keyboard.

When you type stuff, you're typing it on stdin (a standard input terminal). A standard input device, which is usually the keyboard, but Linux also allows you take standard input from a file.

For example:

cat < myfirstscript

|

This would tell cat to take input from the file myfirstscript instead of from the keyboard (This is of course the same as: cat myfirstscript).

Essentially what this boils down to is that the input for this command (cat) is no longer coming from where it was expecting to come from (the keyboard), but is now coming from a file.

Linux associates the file descriptor 0 with standard input. So, we could have said:

cat 0< myfirstscript

|

which would have produced the same as the previous command.

Why could we leave out the 0?

Since, at the time of creating a process, one standard input is associated with the process.

Standard output, as created at process creating time, goes to the console, your terminal or an X terminal. Exactly where output is sent clearly depends on where the process originated.

Our console or terminal should be the device that is accepting the output. Running the command:

cat file

|

would [con]catenate the file, by default, to our standard output i.e. our console or terminal screen. (Where the process originated.)

We can change this and redirect the output to a file.

Try the following:

ls -al myfirstscript > longlisting

|

This redirects the output not to the screen, but rather to a new file 'longlisting'. The process of redirection using the '>' will create the file 'longlisting' if it was not there. Alternately, if the file 'longlisting' existed, it would remove it, (removing the contents too of course) and put a new file there with the directory listing of "myfirstscript" within it.

How would we see the contents of the file?

cat longlisting

|

This will show the size, the owner, the group and the name of the file myfirstscript inside the file 'longlisting'.

In this example, the output of the ls command has not gone to the standard output (the screen by default), but rather into a file called 'longlisting'.

Linux has got a file descriptor for standard output, which is 1 (similar to the 0 for standard input file descriptor).

The above ls -la example can be rewritten as:

ls -al myfirstscript 1> longlisting

|

which, would do the same thing as leaving out the file descriptor identifier and just using the greater than sign.

In the same way we use our standard input as < (or a 0<), we use a > (or a 1> ) to mean standard output.

Returning to the cat example above, we could type data on the command line that gets sent directly to a file. If the file is not there it will create it and will insert the content we typed on the command line, into the file. This is illustrated below:

$ cat > test

This is the first line.

This is the second line.

This is the final line. < Ctrl-d pressed here >

$ cat test

This is the first line.

This is the second line.

This is the final line.

|

Doing this does not return us to a prompt. Why? What is it waiting for?

It's waiting for us to actually enter our string into a buffer. You should start typing a sentence then another sentence, and another, etc. Each time you type a character, it's getting appended to the file 'newfile'.

When you have finished typing in what you want, press Ctrl-d. The Ctrl-d (^D) character will send an end of file signal to cat thereby returning you to your prompt.

If you list your directory contents using:

ls -al

|

you should see the file 'newfile'. This file is the one that you've just created on the command line.

cat newfile

|

will show you the contents of 'newfile' displayed onto the standard output.

Now why did all of this work? It worked because cat was taking its input from standard input and was putting its output not to standard out as normal, but was rather redirecting output to the file 'newfile'.

On typing the command and hitting enter, you are not returned to your prompt since the console is expecting input to come from stdin; you type line after line of standard input followed by ^D. The ^D stopped the input, by sending an end-of-file character to the file, hence the file 'newfile' is created.

Question: What do you think tac > newFile will do?

If you decide you want to copy the contents of two files to another file (instead of using the cp command - there is more than one way to skin a cat in Linux) you could do the following:

cat < myfirstscript > mynewscript

|

Incidentally, this is equivalent to

cp myfirstscript mynewscript

|

Well that's fine and dandy, but what happens if we don't want to delete our longlisting script and want to rather append it to a file that's already there.

Initially we typed:

ls -al myfirstscript > longlisting

|

If you did this a second time, it would overwrite the first file longlisting. How could you append to it? Simply adding two greater than signs, immediately following one another as in the example below, would append output to the file 'longlisting'

ls -al myfirstscript >> longlisting

|

Each time you ran this command, it would not clobber (remove the contents of) the longlisting file but would rather append to it and as a result, the file 'longlisting' would grow in size.

A final note on standard output and standard input is that redirection must be the final command that you execute on the command line. In other words, you can't do any other command after the redirection. We will talk about this in a later section on pipes.

The final component in this dialog of file descriptors is standard error.

Every command could send it's output to one of two places: a) it could be valid output or b) it could be an error message.

It does the same with the errors as it does with the standard output; it sends them directly to your terminal screen.

If you run the command (as your user):

find / -name "*" -print

|

you would find that find would find a load of things, but it would also report a lot of errors in the form of 'Permissions denied. . .'.

Perhaps we're not interested in 'Permission denied...' - we may wish to discard these messages (as root, no error messages would be returned).

If we ran the command, we could put standard error into a file (remember standard error by default is going to the console; the same place as stdout). In this case I'm going to put it into a special file on the Linux system called /dev/null.

/dev/null is similar to the "Recycle Bin" under Windows except it's a waste paper basket with a point of no return - the Linux black hole! Once information has gone into /dev/null, it's gone forever.

Initially, I'm going to put any errors that the find command generates into /dev/null, because I'm not interested in them.

We saw that standard input was file descriptor 0, the standard output was file descriptor was 1, so no prizes for guessing that standard error has file descriptor 2.

Thus, the command

find / -name "*" -print 2> /dev/null

|

discards any errors that are generated by the find command. They're not going to pollute our console with all sorts of stuff that we're not interested in.

![[Note]](../images/admon/note.png) | Note |

|---|---|

Notice there is no space between the 2 and the > | |

We could do this with any command, we could for example say:

ls -al 2> myerror

|

Which would redirect all the error messages into a file called "myerror".

To recap we can use either:

< OR 0< for standard input

> OR 1> for standard output

but for standard error I have to use 2>

|

It's not optional to leave off the number two (2). Leaving it off would mean that the standard output would go to "myerror", including a 2 means standard error.

In the listing case of:

ls -al 2> myerror

|

puts any errors into a file called 'myerror'.

If we wanted to keep all those error messages instead of using a single greater than sign, we would use double greater than signs.

By using a single greater than sign, it would clobber the file 'myerror' if it exists, no different to standard output. By using a double greater than sign, it will append to the contents of the file called myerror.

ls -al 2>> myerror

|

Thus the contents of 'myerror' would not be lost.

With our new found knowledge, let's try and do a couple of things with the find command. Using the find command, I want to completely ignore all the error messages and I want to keep any valid output in a file. This could be done with:

find / -name "*" -print 2> /dev/null > MyValidOutput

|

This discards any errors, and retains the good output in the file "MyValidOutput". The order of the redirection signs is unimportant. Irrespective of whether standard output or standard error is written first, the command will produce the correct results.

Thus, we could've rewritten that command as:

find / -name "*" -print >MyValidOutput 2>/dev/null

|

Finally I could've appended the output to existing files with:

find / -name "*" -print >> output 2>> /dev/null

|

Clearly appending to /dev/null makes no sense, but this serves to illustrate the point that output and errors can both be appended to a file.

What happens if we want to take our standard output and put it into the same file as standard error? What we can do is this:

find / -name "*" -print 2> samefile 1>&2

|

This means that standard error goes into a file called samefile and standard output goes to the same place as file descriptor 2 which is the file called samefile.

Similarly we could've combined the output by doing:

find / -name "*" -print 1> file 2>&1

|

This captures the output of both standard output and standard error into the same file.

Clearly, we could've appended to a particular file instead of overwriting it.

find / -name "*" -print 1>> file 2>>&1

|

Using the simple scripts from the previous exercises, ensure that all output from the df, du, vmstat, iostat commands are written to a file for later use.

Write a script to run the vmstat command every 10 seconds, writing output to a file /tmp/vmoutput. Ensure that the existing file is never clobbered.

Propend the date to the beginning of the output file created by the script in question 2 above. (put the date on the front - or top - of the file)

Up to now, we've seen that we can run any command and we can redirect its output into a particular file using our redirection characters (>, <, >>, 2> or 2>>).

It would be good if we could redirect the output of one command into the input of another. Potentially we may want the output of the next command to become the input of yet another command and so forth. We could repeat this process over and over until we achieve the desired output.

In Linux, this is affected using the pipe character, (which is a vertical bar '|'). An example is:

ls -la | less

|

This would pass the output of the ls -al command to the input of the less command.

The effect would be to page your output one page at a time, rather than scrolling it to the standard output all in one go - too fast for you to be able to read, unless of course you are Steve Austin!.

What makes the pipe command powerful in Linux, is that you can use it over and over again.

We could type:

ls -l | grep myfirstfile | less

|

Instead of grep's standard input coming from a file (or keyboard) it now comes from the ls command. The grep command is searching for a pattern (not a string) that matches myfirstfile. The output of the grep command becomes the input of the less command. Less could take its input from a keyboard, or from a file, but in this case it's taken its input from the command grep.

How many of these pipes can we have on a single line? As many as we want! We will see this and how it's used to good effect in our shell scripts from the next chapter onwards.

If pipes were not available, we may have to archive the above command in two or more steps. However, with pipes, this task is simplified (speeded up).

If we take a step back to run the who command:

who | grep <your user name>

|

![[Note]](../images/admon/note.png) | Note |

|---|---|

The < and > here don't mean redirection! | |

We will see only the people that are logged on as your user (hopefully that is only you!!). Perhaps you want to only see people who are logged on to pseudo terminals, then:

who | grep pts

|

which would tell us only the usernames of the people logged on to pseudo terminals.

In these examples we are using the output of the who command as the input to the grep command.

Additionally we could redirect the output of this command to a file called outfile:

who | grep pts > outfile

|

This would produce the file 'outfile' containing only those users who have logged onto pseudo terminals. That's nifty.

We will see this put to good effect as we start scripting, building very complex filters where we use the output of one command to be the input of the next, and the output of that to be the input of the next.

[1] If you haven't been taught vi, an excellent place to start is using vimtutor. Type vimtutor on the command line to begin the lessons. It only takes 45 minutes, but is well worth the effort. vi is the best editor in the world, in my humble opinion Almost everyone in the UNIX/Linux world has some relationship with it - a love-hate relationship: some people love it, some people hate it. But if you're going to learn any editor, learn vi. Since it is the de-facto editor on almost any UNIX/Linux variant in the market today, learning the basics at least should stand you in good stead in you years as a system administrator.

Table of Contents

In order to work with the shell, you will need to be logged in as your user on your system.

What is the shell? The shell is a command interpreter, which I'll talk about in a minute. In essence, there are two types of shells:

the login shell and

the non-login shell

The login shell's responsibility is to log you into the system. This is the shell you get when you're prompted with your username and you have to enter your password. The second type of shell is a non-login shell. This is the shell we're going to be programming in, which in my case, is bash, but could also be the sh, csh , the ksh, or another shell.

There are many non-login shells. We're not going to be concentrating on all the non-login shell as there are most probably 50 different shells that one could use. In order to understand the shell, we need to understand a little more about how the shell starts up.

I'm going to explain the start-up process briefly in order to convey an idea of where your login settings are. For a full comprehensive description of these files, consult the system administration course in this series.

Throughout this course, we'll use bash, primarily because that's the shell that you're probably going to have as your non-login shell on most Linux systems. How do you find out what shell you're using? Well the easiest way to do this, is:

echo $0

|

$0 will return "bash" if you're using the Bourne Again shell, or "sh" if you're using the Bourne shell, "ksh" if you're using the korn shell or "csh" if you're using the C shell or the tcsh.

Once you've established what shell you're using, you know what command interpreter set you're going to be using when creating shell scripts.

The login shells' responsibility is to start the non-login shell and to make sure that your environment variables are set so as to ensure that you can get all the default parameters needed at start-up.

Your login shell will set the PATH environment variable, TERM, the UID and GID of the terminal amongst other things. These are the essential requirements in order to work efficiently. Environmental variables will be covered in detail later. Additionally, the login-shell will set default variable such as USERNAME, HISTSIZE, HOSTNAME, HOME, etc.

Upon start-up, your login shell consults two sets of files:

users', as well as the system-wide login shell initialisation files also known as the profile files

users', as well as the system-wide non-login shell initialisation files commonly referred to as 'shell rc' files.

System-wide profile and shell rc initialisation files reside in the /etc directory, and are owned by root.

System-wide initialisation and profile files:

/etc/profile

/etc/bashrc

|

Users' profile and shell rc files are owned by the specific user, reside in their home directories and are hidden. [2]

~/.bash_profile

~/.bashrc

|

The profile files contain the initialisation data used at login time, thus:

/etc/profile

~/.bash_profile

|

are used by the login shell.

The non-login shell (bash in my case) files are:

/etc/bashrc

~/.bashrc

|

which are run in order to set up variables and other necessaries at shell initialisation.

There are many things you can achieve using these files. For example you can initialise parameters, you can set your PATH, you can set what your prompt looks like, and much more.

Using these files, you can set up your entire environment. Obviously because you, are the owner of your ~/.bash_profile and ~/.bashrc, you have full control to make changes to these files.

Only the root user can change the /etc/profile and /etc/bashrc.

The appendix Appendix C details the differences between bash, tcsh and ksh.

SUMMARY: At login time, your login shell consults /etc/profile which is owned by root, your home ~/.bash_profile which is owned by yourself, the /etc/bashrc which is owned by root and your home ~/.bashrc which is owned by yourself.

Each time a new shell is started, it executes the /etc/bashrc and ~/.bashrc.

Notice that starting a new shell without logging out and in again (a child process) means that the shell has no need to run the profile files again.

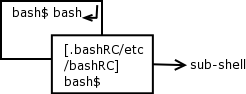

At your command prompt type:

bash

|

This will start a second shell. Looking at the diagram you can see that from the original shell, we've started a new shell. Is that original shell gone? Absolutely not. All you have done is to run a command that just happens to be a new shell. The original shell is still running and so is the new shell.

Again we could run bash at the prompt which would start yet another shell. Each time we run bash, it's consulting /etc/bashrc and ~/.bashrc using these files to initialising the shell. So how do we get back to our original shell? Well we could type:

exit

|

This will return us to the previous shell. Within this shell, we can type exit again which will return us to our original shell. If we type exit one more time, it will return us to our login prompt.

Understanding the distinction between your profile files and your shell rc files is important because when you start to program in the shell you need to know where to set variables in order that they be propagated from shell to shell correctly.

![[Note]](../images/admon/note.png) | Note |

|---|---|

A common thread in Linux is that initialisation files are frequently named by including an 'rc' in the name. The editor, improved vi or vim, uses an initialisation file called .vimrc. The run level initialisation directories are called rc0, rc1, rc2, etc. Hence the name shell rc files, since it's bashrc (for bash) or kshrc (for ksh) of cshrc (for tcsh) | |

These exercises should assist in your understanding of the login and non-login shell initialisation files.

Log in as a user, edit your .bash_profile. Add a variable called MYFULLNAME as follows:

MYFULLNAME="Hamish B Whittal"

Save the file and logout.

Now login again.

Type the following at the prompt:

echo $MYFULLNAME

What happens? Why?

Now type bash again. In the new shell that open type the following:

echo $MYFULLNAME

What happens? Why?

Start another "bash" shell

In it type;

echo $MYFULLNAME

What happens? Why?

Edit your .bashrc, adding this:

MY_FAV_MOVIE="Finding Nemo"

Save the file and log out, log in again then type the following at your prompt:

echo $MY_FAV_MOVIE echo $MYFULLNAMEWhat happens? Why?

Type bash;

echo $MY_FAV_MOVIE echo $MYFULLNAMEWhat happens? Why?

Type bash;

echo $MY_FAV_MOVIE echo $MYFULLNAMEWhat happens? Why?

Can you explain what is happening in these examples and why?

Exit back to your original prompt.

We need to have a quick look at what the shell actually does. The main functions of the shell are described below.

The shell interprets commands. A command typed in at the prompt will know how to be executed because the shell will use its PATH to search for the command.

Typing cd, the command interpreter knows this is a built-in command , and will not search for it in the PATH.

Typing pwd, it understands that it needs to display the local working directory.

Using mv, the shell must know to run an external program, as this is not a shell built-in. [3].

Equally the shell is responsible for parsing the command line to detect errors in syntax. For instance typing

cd..

|

produces an error because there is no white space between the cd and the .. (this is a problem ex-DOS people often stumble over). In this instance the command interpreter would typically give you feedback such as

cd..

bash:cd..: command not found

|

The shell allows variables to be set. Variables such as your PATH variable, or your input field separator (IFS), or setting your shell HISTORY size.

The shell is also responsible for input, output and error redirection (consult the previous chapter to refresh your memory on IO redirection).

The final job of the shell is to allow you, a user, to customise your environment. Setting variables, changing your prompt, running scripts automatically are all things that allow the user some control over their environment.

In summary, a shell script is like a batch file in DOS. Unlike the DOS command interpreter, the shell incorporates a powerful, almost full programming environment.

For instance, it allows 'if' statements, 'while' loops, 'for' loops, 'arrays', as well as a host of other programming techniques.

Scripting will make you life easier as a system administrator.

Table of Contents

- Introduction

- What are regular expressions?

- The fullstop

- Let's explore "sed" syntax

- Square brackets ( [ ] ), the caret ( ^ ) and the dollar ( $ )

- Using sed and pipes

- The splat (asterisk) ( * )

- The plus operator ( + )

- Returning from detour to our discussion on curly braces …

- RE's are greedy for matching patterns

- Placeholders and word boundaries

- Word boundaries ( < and > ) - a formal explanation

- The tr command

- The cut command

- The paste command

- The uniq command

- The Sort command

- The grep command

One of the most important (and powerful) parts of UNIX and Linux, and one that is often overlooked, is the concept of regular expressions.

Regular expressions could change your life (in the Linux world!!!) and if you don't know how to use them you will find yourself handicapped.

This chapter will not only teach you about regular expressions it will also teach how and when to use them.

First log in using your username.

Then you need files containing data. Go to the site and retrieve the file bazaar.txt. This is an extract from Eric S Raymond's book, "The Cathedral and the Bazaar" (the team highly recommends this book as per the preface to this course). All files you download from the site should be placed in your user area. Use the 'less' command to browse the file to familiarise yourself with it's contents. Repeat the process for the files:

emails1.txt emails2.txt emails3.txt columns.txt telnum.txtThirdly, we need a program within Linux that uses regular expressions. For this we're going to use a program called sed. sed is one of those many "Swiss army knives" that you're going to find in Linux.

![[Note]](../images/admon/note.png) | Note |

|---|---|

Some of the other tools that you will use regularly are: find, awk, perl and grep. | |

A regular expression is a pattern matching tool. The pattern can comprise alphanumeric and non-alphanumeric characters, numbers, letters and digits.

Patterns can be used to match certain sequences of characters - almost like a shape and sort. OK, but what does this actually mean?

When doing file manipulations in an earlier chapter, we used patterns. For example, the splat ( * ) is a pattern that matches 0 or more characters. Thus:

ls *

|

matched filenames of 0 or more characters.

The splat is a pattern matching either none (0) characters, or 1 character, or 1 character followed by another character (i.e. 2 characters) or 1 character followed by another and another (i.e. 3 characters) etc., irrespective of what those characters are.

Thus, we've had a glimpse of patterns previously, however RE patterns are much more versatile (and complex) and we want to look at them in detail.

The first of our pattern sequences: a fullstop (or a period as the Americans call it) matches any character.

We might say:

sed 's/Linu./LINUX/g' bazaar.txt

|

This sed expression means:

s (search for) / Linu. / (replace with) LINUX / g (globally) <filename to search>

----------^-------^-----------------^---------

|

Looking at the command in detail: The pattern 'Linu.' says match any set of characters that begins with a uppercase 'l', followed by i, an 'n' and a 'u' followed by any other character - the fullstop matches the "any other character". In the file bazaar.txt the following strings appear, that would match this pattern:

Linus

Linux

|

The pattern we used in the sed above, will match occurrences of Linux and Linus.

Using the fullstop in place of 's' or 'x' ensures that these two strings are matched. However, the pattern will also match:

Linup

|

Why? Because it matches the pattern 'Linu' followed by any single character.

![[Important]](../images/admon/important.png) | Important |

|---|---|

The fullstop in regular expression terms matches any character. | |

Pipe the sed expression through nl, and look at line 9 ... "Linus Torvalds" has been changed to "LINUX Torvalds".

sed 's/Linu./LINUX/g' bazaar.txt | nl

|

Sed is an acronym for "Stream Editor". The "stream", in our example above, comes from the file bazaar.txt.

Besides the input stream sed must also have a command pattern-command combination.

SYNTAX:

sed [command] / pattern / [replace sequence] / [modifier] [command]

|

In this case our command is 's' for search while the pattern we're searching for is enclosed in forward slashes (forward slashes are not strictly required, but we'll not going to complicate matters right now).After the second forward slash, we have a replace sequence.

sed will search for a pattern (Linu.) and on finding it, will replace it with the replace sequence (LINUX).

Finally we have a modifier in this case a 'g' meaning "globally" i.e. search for a pattern and replace it as many times as you find it on a line. If there were 10 instances of 'Linu<any character>' on a line, it would replace all occurrences.

Since sed is a stream editor, it considers each line in the file bazaar.txt independently (in essence, "finish processing this line, then get the next line from the input file"). The stream ends when an end-of-file character is reached. Thus the "globally" modifier only operates on the current line under consideration.

If we just wanted to replace only the second instance and not the first or the third, etc. we could replace the g with a 2. sed would then only replace the second instance of the desired pattern. As you go through this chapter, you will become friendly with sed, and work with many patterns.

To Summarise: a fullstop (.) as a regular expression matches any single character.

Using sed and the bazaar.txt file, write regular expressions to match the following:

Any word containing the letters "inu" in order. Thus, your RE should match Linux , Linus, linux and linus.

Match only 5 letter words.

Write a RE to match only words with an even number of letters up to a maximum of 10 letters.

Replace all the words 'the' with the word "ETH" in the file bazaar.txt